How to Build Software That Tells the Truth Before Users Do

Learn how to prevent system outages by understanding failure propagation, observability, bounded system design, and practical reliability engineering strategies for modern distributed systems.

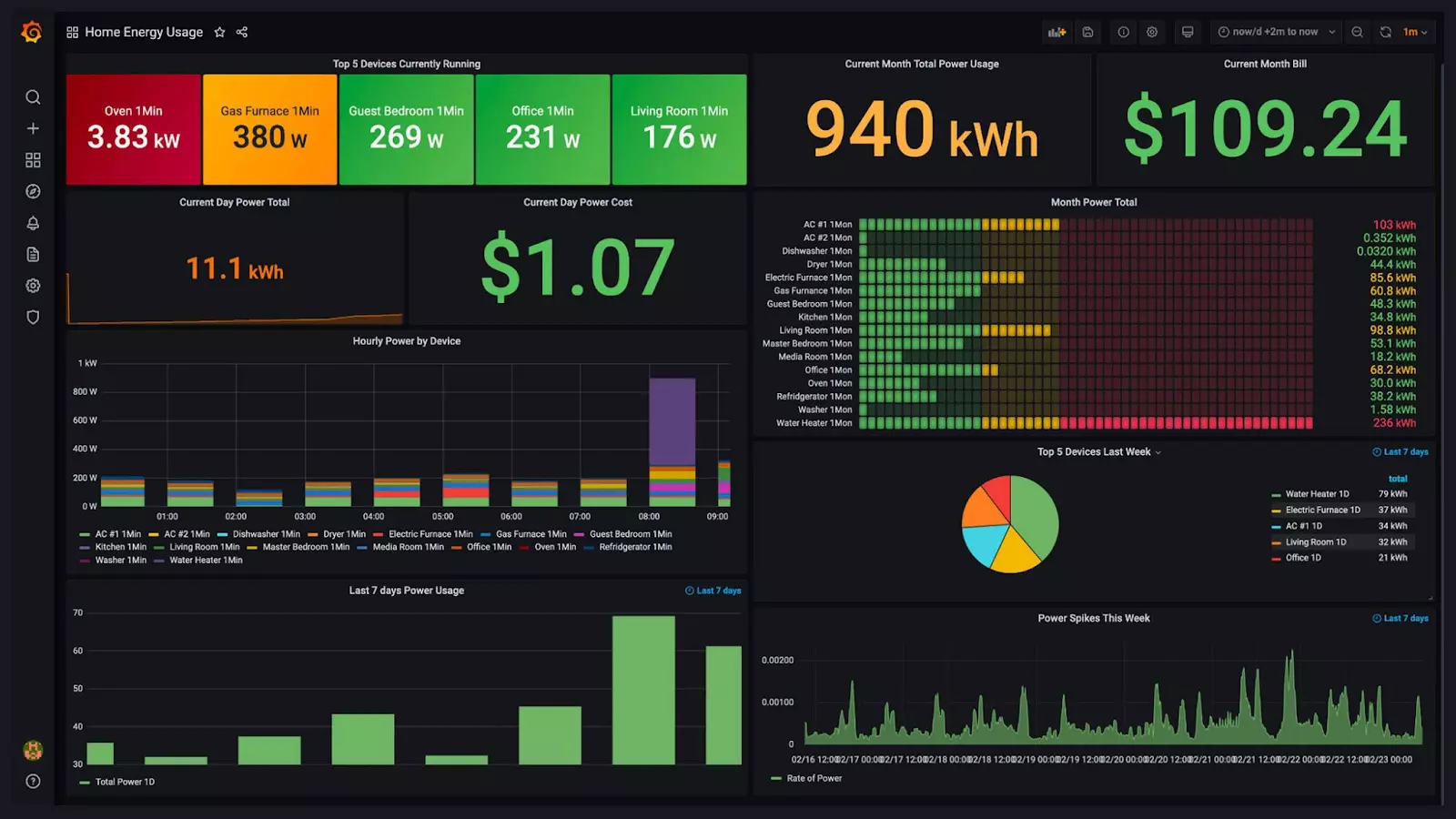

Engineers monitoring observability dashboards to detect latency spikes, system saturation, and early signs of distributed system failures.

Most modern outages don’t start with a dramatic crash. They start with a small deviation: a dependency slows down, queues grow, caches churn, retries multiply, and cost rises quietly until the system snaps under its own defensive behavior. If you want a practical, engineering-first way to reduce that pain, treat operational truth as a product requirement: credibility is something you build through evidence, not slogans. This article focuses on mechanics: how failure propagates, what to instrument so it explains “why,” and which design constraints prevent minor turbulence from turning into a full incident. The goal is shorter time-to-diagnosis and smaller blast radius, not unrealistic perfection.

Failure Has a Shape, and It’s Usually Predictable

Incidents follow repeatable physics. A downstream service becomes slower, upstream timeouts fire, and the system “helps” by retrying. Those retries add load exactly when the dependency is least able to handle it. The result is feedback amplification: a partial degradation becomes a platform-wide event. The frustrating part is that the early signs are usually observable; the system just isn’t instrumented to connect them into a coherent story.

To break the pattern, stop treating failure as a binary state. A service can be alive yet unusable. Latency spikes can be more dangerous than errors because they consume threads, hold locks, and fill queues. The critical engineering question becomes: where does performance deterioration create more work for the system? Those are your amplification points. You don’t “monitor” them away—you constrain them away.
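The retry amplification described in this section can be made concrete with a little arithmetic. The sketch below is only a simplified model: it assumes every layer retries independently and that attempts fail independently with a fixed probability, which real systems rarely satisfy exactly. The function names are illustrative.

```python
def worst_case_attempts(retries_per_layer: int, layers: int) -> int:
    """Attempts that can reach the deepest dependency for ONE user request
    when every call fails and every layer retries on its own."""
    return (1 + retries_per_layer) ** layers


def expected_attempts(failure_rate: float, max_retries: int) -> float:
    """Average attempts per call at a single layer, assuming each attempt
    fails independently with probability `failure_rate`.
    Attempt k+1 only happens if the first k attempts all failed."""
    return sum(failure_rate ** k for k in range(max_retries + 1))


if __name__ == "__main__":
    # Three layers, each retrying up to three times.
    print(worst_case_attempts(retries_per_layer=3, layers=3))
    # A dependency failing half the time, with up to three retries.
    print(expected_attempts(failure_rate=0.5, max_retries=3))
```

Under these assumptions, three layers that each retry three times can turn one user request into as many as 64 attempts against the deepest dependency, which is why stacked retries are a typical amplification point.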

Instrumentation That Answers “Why,” Not Just “What”

Many teams have dashboards, logs, and alerts and still lose the first 30 minutes of an incident. That’s not a tooling problem; it’s a correlation problem. You need stable identifiers that connect an external symptom to an internal cause. When a user sees a timeout, you should be able to trace that request across services, queues, and background jobs and know which build and which feature-flag state was active.

Telemetry becomes useful when it encodes intent. Raw stack traces are rarely enough because incidents often involve saturation and timeouts rather than clean exceptions. Structured events that say “fallback served,” “rate limit applied,” “cache miss storm detected,” or “deadline exceeded at dependency X” cut through noise. High-cardinality context (tenant, route, version, flag state) is often the fastest way to isolate blast radius, but it must be placed where cost is predictable—typically sampled traces and structured logs with retention tiers—so you don’t explode metrics spend.
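As a rough illustration of intent-carrying events with stable identifiers, here is a minimal sketch using only the Python standard library. The field names (`trace_id`, `tenant`, `route`, `build`, `flags`) and all values are hypothetical; a real system would more likely get this from its tracing or logging library.

```python
import contextvars
import json
import time

# Per-request correlation fields. The default dict is never mutated in place.
_request_ctx = contextvars.ContextVar("request_ctx", default={})


def bind_context(**fields):
    """Attach correlation fields to the current request's context."""
    _request_ctx.set({**_request_ctx.get(), **fields})


def event(name, **fields):
    """Build one structured event (a JSON line) that carries the bound context."""
    record = {"ts": time.time(), "event": name, **_request_ctx.get(), **fields}
    return json.dumps(record, sort_keys=True)


# Hypothetical values, bound once at the edge of the request.
bind_context(
    trace_id="4bf92f35",
    tenant="acme",
    route="/checkout",
    build="2024.06.1",
    flags={"new_pricing": True},
)

# The event states what the system decided, not just that something broke.
line = event("deadline_exceeded", dependency="inventory", budget_ms=250)
print(line)
```

Because every event inherits the same context, a single query on `trace_id` or `tenant` can connect the user-visible timeout to the dependency, build, and flag state involved.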

A practical test: in the first five minutes of an incident, can your observability tell you who is affected, what changed and along which path, which dependency correlates with the symptom, and what action would limit the damage? If not, you are collecting data, not building diagnosis.

Design Constraints That Contain Damage

Reliability is mostly about boundedness. Bounded time prevents a slow dependency from consuming all your resources. Bounded concurrency prevents one hot tenant or one pathological route from starving everyone else. Bounded memory and bounded queues stop your system from storing its own future outage.
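A bounded queue is the simplest of these limits to show. The sketch below uses the standard library's `queue.Queue`; the bound of 2 is arbitrary and chosen only to keep the example small.

```python
import queue

# A deliberately tiny bound; real limits come from capacity measurements.
work = queue.Queue(maxsize=2)


def submit(job) -> bool:
    """Accept the job if there is room; otherwise reject it immediately
    instead of letting the backlog grow without limit."""
    try:
        work.put_nowait(job)
        return True
    except queue.Full:
        return False


# With no consumer running, the third and fourth jobs are rejected.
results = [submit(i) for i in range(4)]
print(results)
```

Rejecting at the door is visible and cheap. An unbounded queue hides the same overload until latency or memory gives out.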

Deadlines are non-negotiable: every incoming request should carry a time budget, and every downstream call must respect whatever time remains. Bulkheads isolate resources (thread pools, queues, connection pools) so that one failure domain cannot drain another. Circuit breakers should trip on signals that actually predict collapse, such as rising latency percentiles, saturation, and queue depth, rather than on raw error counts alone. Load shedding is the uncomfortable but often necessary move: when overloaded, refusing some work can be the only way to keep serving any work. Graceful degradation turns “all-or-nothing” into “core service survives,” but it requires pre-deciding what can be sacrificed without violating user trust.
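Two of these constraints, deadline propagation and bulkheads, fit in a short sketch. The class names `Deadline` and `Bulkhead` are illustrative, not taken from any particular library, and the injectable `clock` exists only to make the behavior easy to test.

```python
import threading
import time


class DeadlineExceeded(Exception):
    pass


class BulkheadFull(Exception):
    pass


class Deadline:
    """An absolute expiry shared by every hop of one request."""

    def __init__(self, budget_s: float, clock=time.monotonic):
        self._clock = clock
        self._expires_at = clock() + budget_s

    def remaining(self) -> float:
        return max(0.0, self._expires_at - self._clock())

    def timeout_for(self, cap_s: float) -> float:
        """Timeout for a downstream call: the per-call cap or the time left,
        whichever is smaller. Fails fast when nothing is left."""
        left = self.remaining()
        if left <= 0:
            raise DeadlineExceeded("no budget left; do not start the call")
        return min(cap_s, left)


class Bulkhead:
    """Caps concurrent calls into one dependency; rejects instead of queueing."""

    def __init__(self, max_concurrent: int):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def try_run(self, fn, *args):
        if not self._slots.acquire(blocking=False):
            raise BulkheadFull("dependency is at its concurrency limit")
        try:
            return fn(*args)
        finally:
            self._slots.release()
```

A caller would create one `Deadline` per incoming request and pass `deadline.timeout_for(cap)` to each downstream client, so a slow first hop automatically shrinks the time the later hops may spend.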

All of these patterns are boring by design. That’s the point. Boring constraints are what keep the system coherent when everything is noisy.

A Single Operational Checklist That Actually Moves the Needle

If you only do a handful of things, do these—because they convert “mystery incidents” into “explainable failures” and reduce repeat outages.

Define golden signals (latency, traffic, errors, saturation) for each critical user journey. Alert only on conditions that call for a specific action, and keep purely informational signals out of the paging path.

Implement end-to-end tracing with context that propagates across HTTP calls, queues, and background jobs, and stamp every trace with the build version and the current feature-flag state.

Make retries bounded: cap the number of attempts, use exponential backoff with jitter, respect a global deadline, and stop retrying on non-transient errors. Any operation you retry must be idempotent.

Put overload controls in place: per-tenant quotas, queue limits, and load shedding for non-essential endpoints. Validate them with controlled stress tests.

Turn every unknown from an incident into code: each “we didn’t know” should become a new metric, structured log field, trace attribute, or guardrail.
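Of the checklist items above, bounded retries are the easiest to get subtly wrong, so here is a minimal sketch. `TransientError` is a hypothetical marker class, the default values are placeholders rather than recommendations, and the jitter is the "full jitter" variant (a uniform delay between zero and the exponential cap), which is one common choice among several.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for errors worth retrying (for example, timeouts)."""


def call_with_retries(op, *, max_attempts=3, base_delay_s=0.1, max_delay_s=2.0,
                      deadline_s=1.0, sleep=time.sleep, clock=time.monotonic,
                      rng=random.random):
    """Run op() with capped attempts, jittered exponential backoff, and a
    global deadline. Any exception other than TransientError propagates
    immediately without a retry."""
    expires_at = clock() + deadline_s
    for attempt in range(1, max_attempts + 1):
        try:
            return op()
        except TransientError:
            if attempt == max_attempts:
                raise  # attempts exhausted
            # Full jitter: uniform in [0, min(cap, base * 2^(attempt - 1))].
            delay = rng() * min(max_delay_s, base_delay_s * 2 ** (attempt - 1))
            if clock() + delay >= expires_at:
                raise  # waiting would overrun the caller's time budget
            sleep(delay)
```

The sketch assumes `op` is idempotent. If it is not, a retry after an ambiguous timeout can apply the same change twice.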

Test for Reality: “Slow Is Down”

Correctness tests don’t reveal the failures that actually take systems out. Distributed systems often die from slowness, not from clean crashes: a timeout triggers retries, the retries add load, and the added load makes responses slower still. Your tests therefore need to treat latency and partial failure as first-class scenarios, because that is what production actually looks like.

The most valuable experiments are controlled and specific. Inject latency into a single dependency and verify deadlines, fallbacks, and circuit breakers behave the way you think they do. Simulate a “blackhole” dependency that never responds to expose thread exhaustion and queue growth. Break only one zone, one shard, or one partition to see if the rest of the system degrades gracefully. Finally, test data-shape failure: oversized payloads, pathological queries, cache stampedes, and unexpected cardinality. These are common in production and rare in staging unless you force them.
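A latency-injection experiment can start very small. The sketch below uses `asyncio` with a stand-in dependency; `inventory_lookup`, `get_stock`, and the 50 ms budget are all hypothetical. A real experiment would inject the delay at the network or client layer instead of inside the function.

```python
import asyncio


async def inventory_lookup(delay_s: float) -> str:
    """Stand-in for a real dependency; delay_s is the injected latency."""
    await asyncio.sleep(delay_s)
    return "live"


async def get_stock(injected_delay_s: float, budget_s: float = 0.05) -> str:
    """Call the dependency under a deadline; serve a fallback on timeout."""
    try:
        return await asyncio.wait_for(
            inventory_lookup(injected_delay_s), timeout=budget_s
        )
    except asyncio.TimeoutError:
        return "fallback"


def run_experiment():
    """Compare behavior with a healthy dependency and a slow one."""
    healthy = asyncio.run(get_stock(0.0))
    slow = asyncio.run(get_stock(1.0))  # far beyond the 50 ms budget
    return healthy, slow


if __name__ == "__main__":
    print(run_experiment())
```

The useful assertion is not only that the fallback was served, but that it was served within the budget instead of after the full injected delay.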

When you run these experiments, the output you want is not just “it survived.” You want a coherent narrative in telemetry: where time was spent, where saturation occurred, which protection activated, and which user journeys were preserved.

The LLM Era Adds a New Failure Plane

If your system includes LLM components—either as a product feature or as part of operations—you inherit failure modes that look unfamiliar but are still manageable with the same discipline. Non-determinism means you can’t assume identical outputs; if an LLM is in a decision path, you must log model version, prompt template, safety settings, and retrieved context. Hidden dependency cost can turn “nice intelligence” into a latency anchor unless you enforce strict time budgets, caching, and hard fallbacks. Retrieval or prompt drift can masquerade as randomness, so prompts and indexes should be versioned artifacts with change control.

The operational rule stays the same: correlation plus boundedness.

If every outcome can be traced to a specific model, prompt, and context, and those dependencies are bounded in time, the added complexity stays contained. Systems do not become reliable because someone asks for more reliability; they become reliable by anticipating failure, bounding it, and explaining it. Put limits on time, concurrency, retries, and resources, and make telemetry answer “why” quickly. If you keep building software that tells the truth about its own state, you will spend less time guessing and more time building robust products.